How HGC-Net Differs from Today’s Interpretability Approaches

Interpretability in deep learning has evolved significantly over the past decade. From post-hoc explanation methods to c...

Thoughts on Software Architecture, Artificial Intelligence, and the future of Development.

One of the most persistent problems in deep learning is not performance; it is opacity. Modern models can classify, predi...

Deep learning models have achieved remarkable success across a wide range of tasks, particularly in computer vision. How...

Most software systems are designed with one implicit assumption: someone will always be there to maintain them. Teams will...

Most software systems don’t fail because of bugs. They fail because of invisible dependency. Contracts are consumed...

Architecture

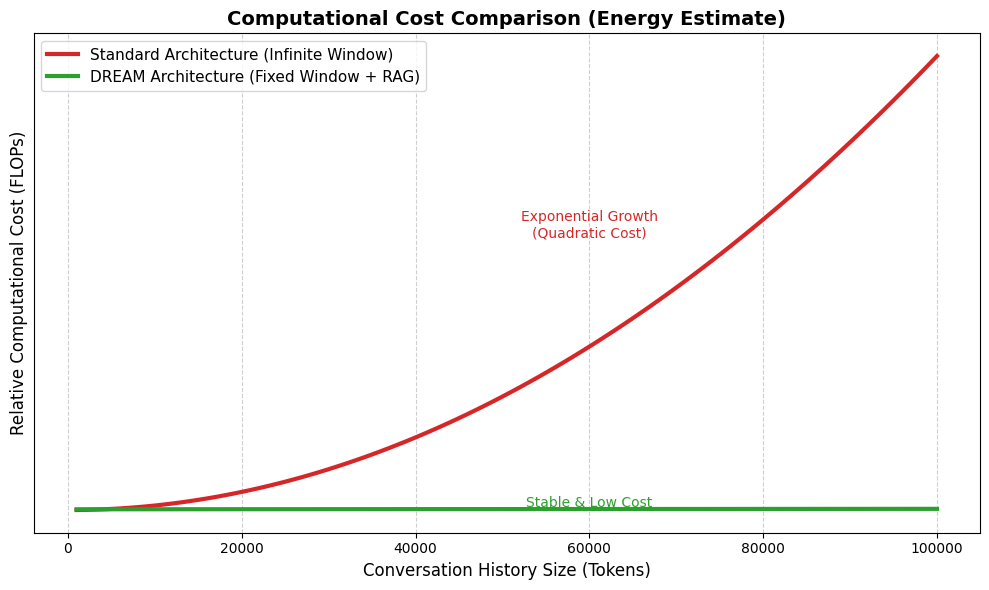

The Hidden Problem Nobody Talks About: Memory That Never Forgets

Most AI systems today suffer from the same silent flaw: ...

Most software systems are designed under an implicit assumption: someone will always be there to maintain them. New featur...

Architecture

Introduction

When discussing artificial intelligence systems, especially those based on large language models (LLMs), ene...

CLI

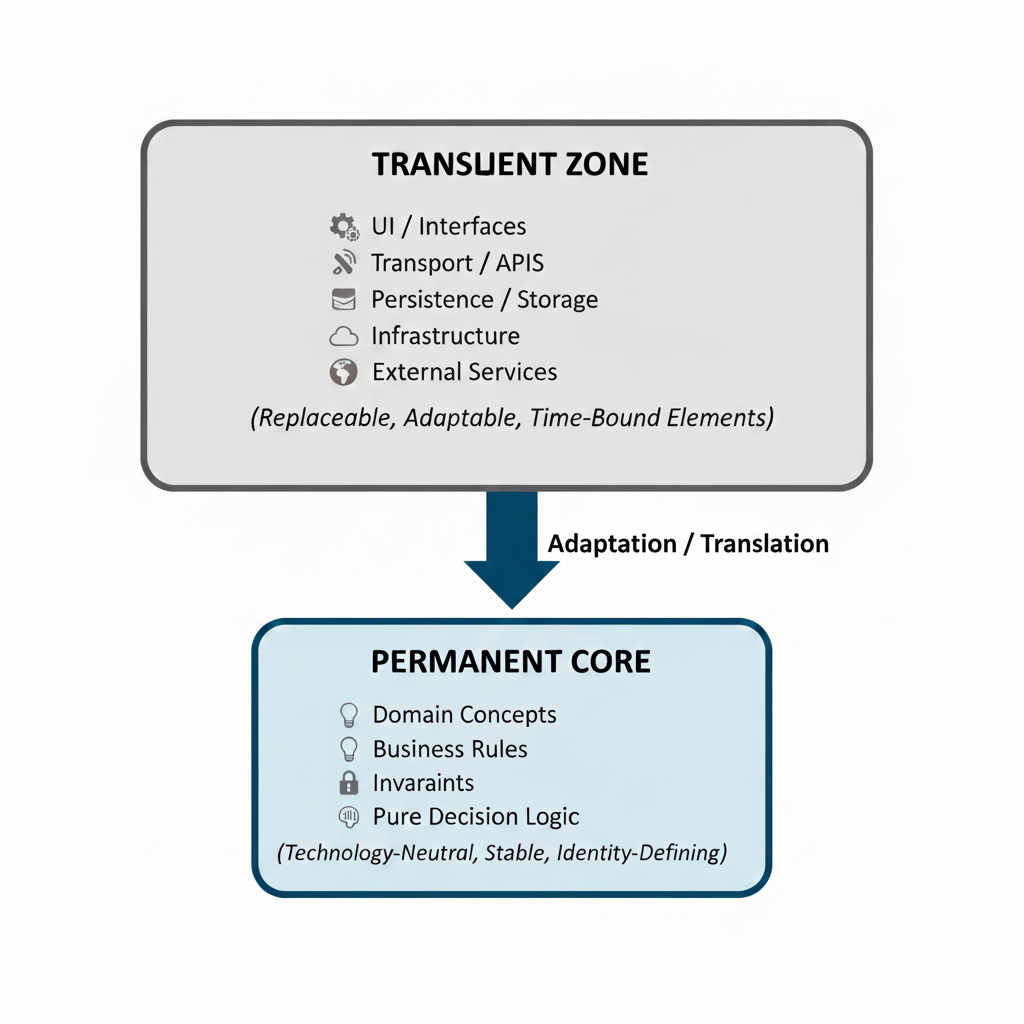

Modern software systems rarely fail because of missing features. They fail because data ownership becomes unclear,...

Architecture

Modern software architecture is remarkably good at solving today’s problems. It is far less effective at surviving tomor...
