Introduction

When discussing artificial intelligence systems, especially those based on large language models (LLMs), energy consumption is usually treated as a hardware problem. GPUs, accelerators, and data centers are often blamed for the growing operational cost of AI.

However, there is a less visible but equally critical contributor to energy consumption: memory architecture.

Many current AI systems treat memory as an ever-growing resource. Conversations, embeddings, logs, and retrieval contexts accumulate indefinitely, and each interaction must scan, retrieve from, or recompute over an increasingly large memory space. This design choice has direct consequences for energy usage, even when model weights remain unchanged.

This article explores why memory architecture plays a central role in AI energy efficiency, and how adaptive, episodic memory strategies can reduce unnecessary computational and energy costs.

The Hidden Energy Cost of Memory Growth

In LLM-based systems, energy consumption is not driven only by inference. It is also driven by:

- embedding generation

- vector similarity searches

- context reconstruction

- repeated retrieval of stale or low-value information

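As memory grows, retrieval cost grows with it. An exact (brute-force) similarity search is linear in the number of stored vectors per query, so if every interaction both adds to memory and searches it, cumulative retrieval cost grows roughly quadratically with the number of interactions. Approximate indexes lower the per-query cost, but the actual curve still depends on indexing strategy, sharding, and cache locality.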
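To make this growth concrete, the sketch below counts vector comparisons under one simplifying assumption: every interaction performs a brute-force scan of the whole store and then appends one new item. The function name `cumulative_scan_cost` and the `cap` parameter are illustrative, and comparison counts are only a rough proxy for energy, not a measurement of it.

```python
from typing import Optional


def cumulative_scan_cost(interactions: int, cap: Optional[int] = None) -> int:
    """Total comparisons when each interaction scans the store, then adds one item.

    `cap` is a hypothetical upper bound on store size (None means unbounded).
    """
    total = 0
    stored = 0
    for _ in range(interactions):
        total += stored              # a brute-force scan touches every stored item
        stored += 1                  # the interaction itself is then remembered
        if cap is not None:
            stored = min(stored, cap)
    return total


unbounded_1k = cumulative_scan_cost(1000)
unbounded_2k = cumulative_scan_cost(2000)
capped_1k = cumulative_scan_cost(1000, cap=100)
```

Under these assumptions, doubling the number of interactions from 1,000 to 2,000 roughly quadruples the total comparisons (499,500 versus 1,999,000), while capping the store at 100 items brings the total for 1,000 interactions down to 94,950.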

In practice, many systems fall into one of two extremes:

- Stateless systems, which discard memory and repeatedly recompute context

- Naive persistent systems, which store everything and retrieve indiscriminately

Both approaches are inefficient. The first wastes computation; the second wastes storage, retrieval bandwidth, and energy.

Why “More Memory” Is Not the Same as “Better Memory”

A common misconception is that long-term memory failures in AI systems can be solved simply by increasing context size or storage capacity.

This ignores a fundamental reality:

Energy cost scales with memory access, not just memory size.

Retrieving irrelevant or low-signal memories consumes energy without contributing meaningful cognitive value. Over time, this leads to systems that are technically persistent but energetically unsustainable.

Human cognition does not work this way. Biological memory systems rely on selective retention, decay, and episodic compression to balance continuity with efficiency. Software systems rarely implement these principles.

Episodic Retention as an Energy Optimization Strategy

An episodic memory architecture does not attempt to preserve raw interaction logs indefinitely. Instead, it operates on higher-level memory units that represent meaningful events, summaries, or behavioral patterns.

From an energy perspective, this has three key advantages:

- Reduced retrieval scope: episodic units shrink the search space during context reconstruction.

- Lower embedding churn: fewer memory updates mean fewer embedding recomputations.

- Controlled memory lifespan: retention policies prevent unbounded growth.

In the DREAM architecture, memory retention is not static. Each episodic unit has a time-to-live (TTL) that can expand or decay based on user interaction and relevance. This adaptive retention mechanism reduces unnecessary memory access over time.
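One way such an adaptive TTL could work is sketched below. This is a minimal illustration, not the DREAM reference implementation: the `EpisodicUnit` class, the `touch` and `tick` functions, and the `boost` and `max_ttl` parameters are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EpisodicUnit:
    summary: str
    ttl: float  # remaining lifetime, in arbitrary time units


def touch(unit: EpisodicUnit, relevance: float,
          boost: float = 2.0, max_ttl: float = 90.0) -> None:
    """Extend a unit's TTL when it is accessed, scaled by relevance in [0, 1]."""
    unit.ttl = min(max_ttl, unit.ttl + boost * relevance)


def tick(units: List[EpisodicUnit], elapsed: float = 1.0) -> List[EpisodicUnit]:
    """Age every unit by `elapsed` and return only those that have not expired."""
    survivors = []
    for unit in units:
        unit.ttl -= elapsed
        if unit.ttl > 0:
            survivors.append(unit)
    return survivors


trip = EpisodicUnit("planning a trip to Lisbon", ttl=2.0)
chat = EpisodicUnit("small talk about the weather", ttl=2.0)
touch(trip, relevance=1.0)                   # the user returns to the trip topic
remaining = tick([trip, chat], elapsed=2.0)  # only the trip unit survives
```

In this sketch, a unit that is never revisited expires on its own, so later retrievals no longer pay to search it. The cap on TTL keeps even frequently used units from becoming permanent by default.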

Energy Cost Is an Architectural Problem, Not Just a Hardware Problem

It is tempting to assume that future hardware improvements will solve AI energy concerns. While hardware-level optimizations are important, they cannot compensate for inefficient architectural assumptions.

If an AI system continuously retrieves large memory contexts regardless of relevance, no amount of hardware acceleration will eliminate wasted computation.

Energy efficiency must be addressed at the architectural level, where decisions about memory structure, retention, and retrieval are made.

This is why architectural choices such as:

- episodic compression

- adaptive retention

- user-scoped memory indexes

- horizontal orchestration of memory services

have direct implications for energy consumption, even before considering model optimization.
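As an illustration of one item in this list, the sketch below shows a user-scoped index in which a query only ever scans the memories of the user who issued it. The `UserScopedIndex` class and its `comparisons` counter are hypothetical, and the brute-force cosine search stands in for whatever vector index a real system would use.

```python
import math
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def _cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class UserScopedIndex:
    """Keeps one small memory list per user instead of a single global store."""

    def __init__(self) -> None:
        self._by_user: Dict[str, List[Tuple[Sequence[float], str]]] = defaultdict(list)
        self.comparisons = 0  # rough proxy for retrieval work performed

    def add(self, user_id: str, vector: Sequence[float], text: str) -> None:
        self._by_user[user_id].append((vector, text))

    def search(self, user_id: str, query: Sequence[float], k: int = 3) -> List[str]:
        scored = []
        # Only this user's memories are scanned; other users' data is never touched.
        for vector, text in self._by_user.get(user_id, []):
            self.comparisons += 1
            scored.append((_cosine(query, vector), text))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [text for _, text in scored[:k]]


index = UserScopedIndex()
index.add("alice", [1.0, 0.0], "likes hiking")
index.add("alice", [0.0, 1.0], "prefers email")
for i in range(100):
    index.add("bob", [1.0, 1.0], f"note {i}")
top = index.search("alice", [0.9, 0.1], k=1)
```

Here the query for `alice` performs two comparisons rather than 102, because the hundred memories stored for `bob` are outside its scope.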

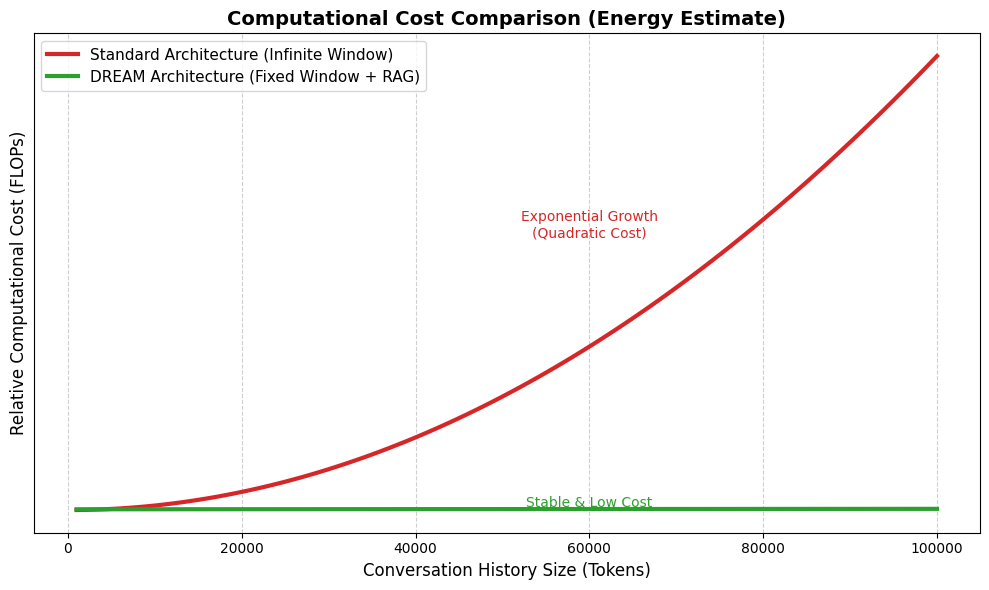

Empirical Evaluation Through Simulation

In the second version of the DREAM study, memory retention and growth behavior were evaluated through reproducible simulations. These simulations compared:

- static retention models

- unbounded memory accumulation

- adaptive episodic retention policies

The results show that adaptive retention significantly limits memory growth while preserving long-term continuity. From an energy standpoint, this translates into fewer retrieval operations, smaller search spaces, and reduced computational overhead.

While these simulations are not hardware benchmarks, they provide architectural evidence that memory-aware design decisions influence energy cost trajectories.
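The toy model below shows the general shape of such a comparison. It is not the simulation used in the DREAM study: the `simulate` function, the policy names, and the parameters `base_ttl` and `core_items` are assumptions made for this example, and the resulting counts are artifacts of those choices rather than reported results.

```python
from typing import Set


def simulate(policy: str, steps: int = 1000,
             base_ttl: int = 30, core_items: int = 5) -> Set[int]:
    """Return the ids of items still stored after `steps` interactions.

    policy: "unbounded" (nothing expires), "static" (fixed TTL),
            or "adaptive" (TTL is renewed for items the user keeps revisiting).
    Assumption: the first `core_items` items are revisited at every step.
    """
    ttl = {}  # item id -> remaining lifetime in steps
    for step in range(steps):
        if policy != "unbounded":
            for item in list(ttl):
                ttl[item] -= 1               # every stored item decays
                if ttl[item] <= 0:
                    del ttl[item]
        if policy == "adaptive":
            for item in ttl:
                if item < core_items:        # revisited items get their TTL renewed
                    ttl[item] = base_ttl
        ttl[step] = base_ttl                 # the new interaction is stored
    return set(ttl)


unbounded = simulate("unbounded")
static = simulate("static")
adaptive = simulate("adaptive")
```

With these parameters the unbounded store ends with 1,000 items, the static policy with 30, and the adaptive policy with 35. The static policy has forgotten the five frequently revisited items, while the adaptive policy keeps them alongside the recent window, which is the continuity-versus-growth trade-off described above.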

Toward Sustainable AI Architectures

As AI systems become more persistent, autonomous, and agent-based, energy efficiency will become a first-class design constraint.

Sustainable AI is not only about greener data centers or more efficient GPUs. It is also about how systems remember, what they choose to forget, and when memory access is justified.

Architectures that treat memory as a governed, adaptive resource offer a practical path toward reducing long-term operational cost without sacrificing cognitive continuity.

Conclusion

Long-term memory in AI systems is not free. Every stored interaction, every retrieval, and every recomputation carries an energy cost.

By reframing memory as an episodic, adaptive, and selectively retained resource, AI architectures can reduce unnecessary computation and move toward more sustainable operation.

Energy efficiency, in this context, is not a hardware afterthought; it is an architectural responsibility.

References and Further Reading

- DREAM Architecture v2.0 (Zenodo): https://zenodo.org/records/18224436

- DREAM Framework (Reference Implementation): https://github.com/MatheusPereiraSilva/dream-framework