Interpretability in deep learning has evolved significantly over the past decade. From post-hoc explanation methods to concept-based architectures, the field has produced a variety of tools aimed at opening the black box.

But not all interpretability approaches are built on the same assumptions, and not all of them treat performance and transparency in the same way.

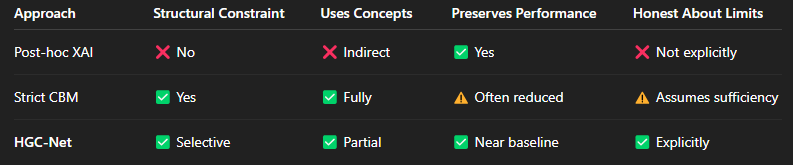

This post outlines how HGC-Net (Hybrid Guided Concept Network) differs from the dominant approaches currently used in industry and research.

1. Post-Hoc Explainability vs Structural Interpretability

Most production systems today rely on post-hoc explanation methods such as feature attribution techniques (e.g., saliency maps, SHAP, Grad-CAM). These methods attempt to explain a model after it has already made a decision.

While useful, they share a limitation:

The internal reasoning of the model remains unchanged.

Post-hoc methods do not restructure the model. They interpret behavior without constraining it. As a result, explanations may reflect correlations rather than causal structure.
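To make the point concrete, here is a minimal sketch of a post-hoc attribution, assuming a toy stand-in model (`black_box`, with made-up coefficients) and a simple finite-difference sensitivity score in place of SHAP or Grad-CAM. It only probes the model from the outside; nothing about the model itself changes.

```python
from typing import Callable, List


def black_box(x: List[float]) -> float:
    """Hypothetical stand-in for an already-trained model (illustrative weights)."""
    return 0.8 * x[0] - 0.1 * x[1] + 0.5 * x[0] * x[2]


def saliency(model: Callable[[List[float]], float],
             x: List[float],
             eps: float = 1e-4) -> List[float]:
    """Central finite-difference sensitivity of the output to each input."""
    scores = []
    for i in range(len(x)):
        up = list(x)
        down = list(x)
        up[i] += eps
        down[i] -= eps
        scores.append((model(up) - model(down)) / (2 * eps))
    return scores


x = [1.0, 2.0, 3.0]
before = black_box(x)
attributions = saliency(black_box, x)  # explanation computed after the fact
after = black_box(x)                   # the model's behavior is untouched
```

The attribution scores describe how the output responds to each input at this one point. They say nothing about how the model is organized internally, and they place no constraint on it.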

HGC-Net takes a different route.

Interpretability is embedded into the architecture itself. Instead of asking “how do we explain this black box?”, it asks:

“Where should interpretability be enforced structurally?”

2. Strict Concept Bottleneck Models vs Hybrid Bottlenecks

Concept Bottleneck Models (CBMs) introduced a powerful idea: force predictions to pass through human-defined concepts. This guarantees interpretability, since every decision depends on explicitly supervised semantic features.

However, strict CBMs assume that:

- All relevant information can be expressed as human-defined concepts.

- Concept space is sufficient to represent task-relevant structure.

In real-world perceptual tasks, these assumptions often fail. The result is performance degradation caused by over-constraining the representation.

HGC-Net differs fundamentally here.

Instead of forcing all information through concepts, it separates internal representations into two components:

- Human-guided semantic concepts (explicit and auditable)

- Free latent dimensions (unconstrained and performance-oriented)

The final prediction uses both.

This hybrid structure preserves interpretability where human knowledge exists, without artificially compressing the rest of the representational space.
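The two-branch idea can be sketched in a few lines. This is an illustrative toy under stated assumptions, not the HGC-Net implementation from the paper: the concept names, the weights, the sigmoid/tanh activations, and the additive linear head are all hypothetical choices made for the example.

```python
import math
from typing import Dict, List

# Hypothetical concept names, chosen only for illustration.
CONCEPT_NAMES = ["striped", "has_wings"]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def dot(w: List[float], v: List[float]) -> float:
    return sum(a * b for a, b in zip(w, v))


def hybrid_forward(features: List[float],
                   concept_w: List[List[float]],
                   latent_w: List[List[float]],
                   head_c: List[float],
                   head_z: List[float],
                   bias: float = 0.0) -> Dict[str, object]:
    # Concept branch: one named unit per human-defined concept.
    concepts = [sigmoid(dot(w, features)) for w in concept_w]
    # Latent branch: unnamed dimensions with no concept supervision.
    latent = [math.tanh(dot(w, features)) for w in latent_w]
    # The prediction reads both branches, kept as separate additive terms.
    concept_term = dot(head_c, concepts)
    latent_term = dot(head_z, latent)
    return {
        "concepts": dict(zip(CONCEPT_NAMES, concepts)),
        "latent": latent,
        "concept_term": concept_term,
        "latent_term": latent_term,
        "logit": concept_term + latent_term + bias,
    }


# Illustrative weights, not trained values.
features = [0.5, -1.0, 2.0]
out = hybrid_forward(
    features,
    concept_w=[[1.0, 0.0, 0.5], [0.0, 1.0, -0.5]],
    latent_w=[[0.2, 0.2, 0.2]],
    head_c=[1.5, -0.7],
    head_z=[0.9],
)
```

Keeping `concept_term` and `latent_term` as separate quantities is the design choice that matters here: the auditable part of the prediction and the unconstrained part never get mixed into a single opaque vector before the output.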

3. Industry Practice: Performance First, Explanations Later

In most applied settings, the workflow is:

- Train the highest-performing model possible.

- Add explanation tools afterward.

- Accept partial interpretability.

This works pragmatically, but it leaves a structural mismatch between the model’s internal logic and its explanations.

HGC-Net proposes a middle ground:

- Do not abandon performance.

- Do not rely solely on post-hoc explanations.

- Instead, design the representation so that part of it is explicitly accountable.

The result is not full transparency, but explicitly bounded opacity.

4. Honest Interpretability

A subtle but important distinction is how explanations are handled when no known concept applies.

Many explanation systems always produce an output, even when the explanation is weak or misleading.

HGC-Net does not fabricate semantic justifications.

If none of the supervised human concepts activate, the model relies on its latent component and makes this boundary visible.

Interpretability is treated as conditional, not universal.
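As a rough sketch of what conditional interpretability could look like in code, assume concept activations arrive as probabilities keyed by name and that a fixed cutoff decides whether a concept counts as active. The function name `explain`, the 0.5 threshold, and the output fields are hypothetical, not taken from the paper.

```python
from typing import Dict


def explain(concepts: Dict[str, float],
            threshold: float = 0.5) -> Dict[str, object]:
    """Report only concepts that actually activated; never invent one."""
    active = {name: p for name, p in concepts.items() if p >= threshold}
    if not active:
        # No semantic justification is available, so say so explicitly.
        return {
            "mode": "latent_only",
            "concepts": {},
            "note": "No supervised concept activated; "
                    "the prediction relied on latent dimensions.",
        }
    return {
        "mode": "concept_supported",
        "concepts": active,
        "note": "Prediction is supported by the listed concepts.",
    }


supported = explain({"striped": 0.9, "has_wings": 0.2})
unsupported = explain({"striped": 0.1, "has_wings": 0.2})
```

The second call returns an empty concept set with a `latent_only` flag. It does not promote the strongest weak activation to an explanation.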

5. What Is Truly Different?

To summarize the distinction: HGC-Net does not try to eliminate the black box.

Instead, it makes the black box:

- Explicit

- Isolated

- Measurable

- Structurally separated from interpretable reasoning
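One way to read "measurable" is to quantify how much of a prediction came from the latent part. The sketch below assumes the model exposes its concept and latent contributions as two separate scalar terms. The `latent_share` metric is a hypothetical illustration, not a metric defined in the paper.

```python
def latent_share(concept_term: float, latent_term: float) -> float:
    """Fraction of total contribution magnitude that came from the latent branch."""
    total = abs(concept_term) + abs(latent_term)
    if total == 0.0:
        return 0.0  # neither branch contributed anything
    return abs(latent_term) / total


mostly_concepts = latent_share(concept_term=1.5, latent_term=0.5)
only_latent = latent_share(concept_term=0.0, latent_term=2.0)
```

A value near 0 means the decision was driven by auditable concepts, and a value near 1 means it came almost entirely from the opaque component.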

Closing Thoughts

The real question in interpretability is not whether a model is fully transparent. It is:

Where should transparency be enforced, and where should flexibility remain?

HGC-Net represents one possible answer: interpret what can be interpreted, constrain what should be constrained, and allow the rest to remain latent, explicitly and honestly.

The full paper, experiments, and technical details are available on Zenodo:

https://doi.org/10.5281/zenodo.18508972

As interpretability continues to evolve, hybrid architectures may provide a practical path forward, especially in domains where human knowledge is partial but still valuable.